Details about our database of court donations

In our database of court donations, anyone can look up which organizations and associations have received over 170 million Euros from the German judiciary. This blogpost aims to explain the coding and technical aspects behind the web application. A link to the complete source code on Github is included at the end.

We at CORRECTIV believe that the development of a story isn’t completed with its publication. That’s why we make our work transparent, publish source codes, and release our data. We’re excited to see what else can be uncovered.

## Project course

First we had to gather all of the data. Our reporter Jonathan Sachse described the obstacles he faced in his blogpost last week (in German). After obtaining all relevant data sets from the various federal states, we organized them by state, year, and authority.

This is where the actual coding set in:

The data is available in PDF, Excel, and Word formats. The aim is to develop a single spreadsheet containing all payment transactions. A quick look through the source files revealed that there was no coherent data pattern. Even though some documents include much more information, the entire set could only be broken down by the name of the institution and the allocated sum.

## Conversion into CSV

In order to merge the data, it’s advisable to convert the individual sets into the same format. The CSV format (Comma Separated Values) is ideal for automatic processing: no formatting, no hidden or combined columns — a single spreadsheet with a clearly defined header. Our spreadsheet has to at least include the columns „name“ and „sum“. Additional columns are optional but should have the same label if they include the same things.

The actual conversion takes a lot of manual work. While Excel spreadsheets can often export simple CSV-data, PDFs usually have to be processed with OCR in order to make the font machine-readable. Afterwards they’re processed through a tool such as Tabula, which recognizes charts.

The resulting CSV sets build the foundation of our data. Mistakes or other problems in the data caused by the treatment with OCR can be corrected at this point. Since this has to be done by hand, it takes quite a bit of time.

## Consolidation and automatic cleansing

After the conversion process a lot of the data remains dirty: the name column often still includes continuos numbering, the address of the organization or other information. The sum column also includes all kinds of digits, punctuation marks, blank characters, and variations in currency (€, EUR, Euro,…).

A Python script (an application written in the programming language Python) reads all CSV data sets, forms an aggregate of column names, cleans known columns according to certain patterns (known phrases and worse), and rewrites all data as a large CSV file.

## Deduplication with Open Refine

Open Refine helps to clean tabulated data sets. The roughest mistakes were already removed by the Python script so that we only need Refine for the deduplication. Refine helps to avoid including the same organization more than once with different sums in our spreadsheet — instead, one sum per organization is the goal. For this we apply the „Cluster & Edit“ mode to the name column.

Open Refine offers several possibilities to merge entries. One of the most accurate features is „nearest neighbor“, which allows Open Refine to compare each individual entry to determine their resemblance. With over 44.000 entries, this takes some time. Therefore it’s advisable to use one of several possible „key collision“ methods. „Key collision“ builds a pattern out of each line, for example by looking at every third character, and combines the entries with matching patterns. Open Refine gives you the possibility to try a whole variety of „key collision“ functions. We played around with the different parameters and found a whole lot of great duplicates to combine.

But what is an actual duplicate and which entries only seem similar? That’s a matter of interpretation. Organizations in varying indexes may have the same name without actually being identical. Regional branches aren’t the same thing as their federal association. The keying function „metaphone3“, for example, merges all organizations beginning with the word „Förderverein“ (booster club), leading to a bunch of mistakes. The fingerprint function in Open Refine is better equipped to recognize a number of typing and OCR mistakes and even normalizes them into the right spelling.

Deduplication entails a number of human decisions. These can be extracted from Refine in the undo/redo mode as JSON-formatted commands and incorporated into the data processing pipeline. This makes the whole process transparent and reproducible. It also allows us to reproduce our decisions when working with new data sets or subsequent updates.

## Pipeline

The idea behind a data pipeline is to let data flow from its source to the database in automatic processing stages so that bugs in the source don’t have to be fixed manually. We built such a pipeline for this project: the individual CSV files are merged into a single document and cleansed. Saved Refine commands are applied to the entire CSV file before uploading the result to our final database.

## The web application

The goal of this project is to empower interested citizens to use our donation database. A web interface with search and filtering options as well as individual pages for each organization is a natural solution.

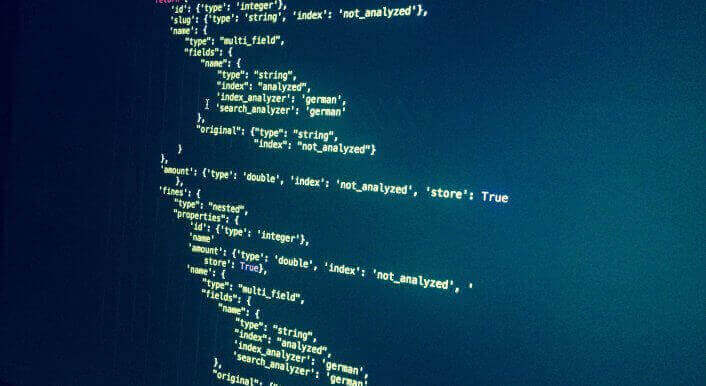

The web application is a Django project. While the data is gathered in a conventional database, the search is indexed in ElasticSearch. The application uses the official ElasticSearch Python Client and a ElasticSearch query with interlaced aggregators, filter queries, and sorting.

The complete source code of our web application can be found on our CORRECTIV GitHub account.